From retail to manufacturing, images taken from space can provide useful information about markets. However, before machine analysis can produce insights for you, you may have to address a few technical challenges.

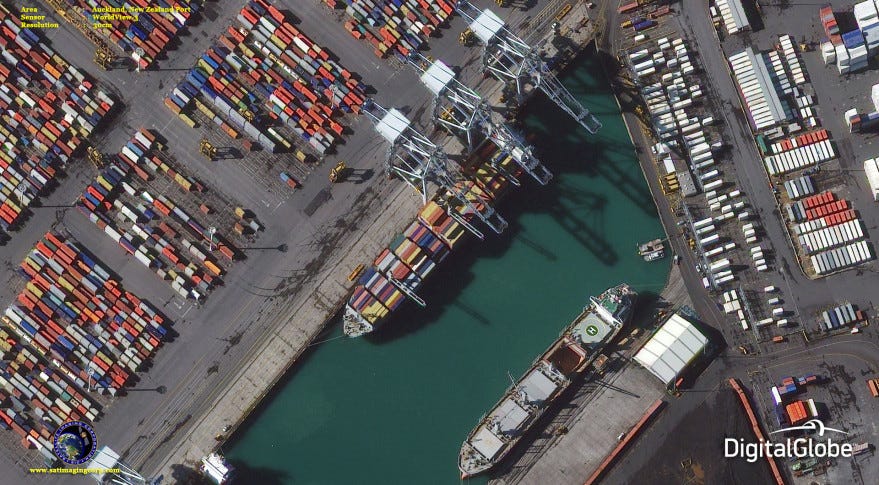

Satellite image providers tend to showcase their best images, which should be relatively easy to analyze:

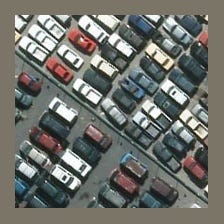

Methods for analyzing high-quality, high-resolution overhead images for specific tasks, such as vehicle detection, have been covered extensively. A great example of such a project is “Cars Overhead with Context” (COWC). Its data set contains 32,716 unique annotated cars and 58,247 unique negative examples from different geographical locations, produced by different image providers. We tested the COWC model on RS Metrics retail data and found it to work well (90% detection rate) on high-quality images such as these:

However, you often receive images that don’t look like any of the samples above. Picture quality may be affected by clouds, lighting, positioning, even processing errors.

Data quality

This is an uncompressed image of a Bed Bath & Beyond parking lot, clipped from an actual satellite photo from one of the top data providers:

For some applications (e.g. analysis of crops or large construction projects), the spatial quality problem may be alleviated through high revisit rates, because “missing” data can be interpolated from multiple images of the same location.
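As a rough illustration of interpolating across revisits, the sketch below builds a per-pixel median composite from several co-registered images, skipping pixels that are flagged as obscured. The function name `composite`, the grid-of-lists image format, and the availability of a per-pixel cloud mask are all assumptions made for this example, not a description of any provider's pipeline.

```python
from statistics import median


def composite(revisits, masks):
    """Per-pixel median over several revisits of the same location.

    revisits: list of co-registered 2-D grids (lists of rows) of intensities.
    masks:    grids of the same shape, True where a pixel is obscured
              (for example by cloud) and should be ignored.
    Returns a grid holding the median of the valid observations for each
    pixel, or None where no revisit has a usable value.
    """
    rows, cols = len(revisits[0]), len(revisits[0][0])
    out = []
    for r in range(rows):
        row = []
        for c in range(cols):
            # Keep only observations that are not masked out at this pixel.
            valid = [img[r][c] for img, m in zip(revisits, masks) if not m[r][c]]
            row.append(median(valid) if valid else None)
        out.append(row)
    return out


# Three revisits of a 1x2 scene; the second pixel is cloudy in the first pass.
revisits = [[[10, 200]], [[12, 50]], [[11, 52]]]
masks = [[[False, True]], [[False, False]], [[False, False]]]
result = composite(revisits, masks)
```

This only helps when the scene is stable between revisits, which is why it suits crops or construction sites better than fast-changing targets.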

I’ve seen several blog articles that emulate bad quality and low resolution by applying Gaussian blur to high-quality images, then test ML models such as “COWC” on the blurred images and draw conclusions about how well those models handle poor data. A model may perform reasonably well in such tests, but fail when faced with the kind of defects and artifacts that are often present in real-world commercial satellite data.
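To make the distinction concrete, here is a minimal sketch that contrasts the two kinds of degradation on a tiny grayscale grid. `gaussian_blur` smooths every pixel in the same way, which is what the blur-based emulations do. `drop_rows` is a hypothetical stand-in for a localized processing defect (a band of lost scanlines), chosen only for illustration. All function names and parameters here are assumptions for this example.

```python
import math


def gaussian_kernel(sigma, radius):
    """Normalized 1-D Gaussian weights for offsets -radius..radius."""
    weights = [math.exp(-(i * i) / (2 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row or column with the kernel, clamping at the edges."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(image, sigma=1.0, radius=2):
    """Separable Gaussian blur: filter the rows, then the columns."""
    kernel = gaussian_kernel(sigma, radius)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def drop_rows(image, start, count, fill=0):
    """Hypothetical localized defect: overwrite a band of rows with `fill`."""
    return [[fill] * len(row) if start <= r < start + count else list(row)
            for r, row in enumerate(image)]


# A 7x7 dark scene with one bright pixel in the middle.
image = [[0] * 7 for _ in range(7)]
image[3][3] = 100

blurred = gaussian_blur(image)      # energy is spread out smoothly
damaged = drop_rows(image, 3, 1)    # the bright pixel is simply gone
```

Blur preserves the overall signal while softening it; the localized defect removes information outright in one region and leaves the rest untouched, so a model validated only on blurred images has not been tested against it.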

Data consistency

A familiar location may become unrecognizable due to rain or snow. As a result, your model (especially if it hasn’t been made sufficiently robust via extensive training) may not produce consistent results for the same point of interest.

Solution

While developing a new version of the data processing workflow at RS Metrics, we aimed for a system that could handle a variety of real-world situations and meet the following requirements and constraints:

- images are “difficult” (see above)

- points of interest are not revisited frequently, or no value can be gained from revisits (because the scene changes rapidly, as in parking lots)

- data may be inconsistent between revisits

- data may be inconsistent within a single image (for example, one half is covered by clouds)

- must work automatically with minimal human intervention

- must scale to parallel processing of hundreds of thousands or millions of images

- must perform significantly faster than humans

- must provide human-like accuracy for the majority of cases
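One way to think about inconsistency within a single image is to judge usability per tile instead of per image. The sketch below is an assumption-laden illustration, not our production method: it splits a grayscale grid into tiles and flags a tile as unusable when it is both very bright and nearly uniform, a crude proxy for cloud cover. The function name `usable_tiles` and the thresholds `bright` and `min_contrast` are hypothetical.

```python
from statistics import mean, pstdev


def usable_tiles(image, tile=2, bright=200, min_contrast=5.0):
    """Map (tile_row, tile_col) -> True if the tile looks usable.

    Heuristic (an assumption for this sketch): a tile whose mean intensity
    is at least `bright` and whose standard deviation is below
    `min_contrast` is treated as cloud-like and marked unusable.
    """
    height, width = len(image), len(image[0])
    flags = {}
    for r0 in range(0, height, tile):
        for c0 in range(0, width, tile):
            pixels = [image[r][c]
                      for r in range(r0, min(r0 + tile, height))
                      for c in range(c0, min(c0 + tile, width))]
            cloudy = mean(pixels) >= bright and pstdev(pixels) < min_contrast
            flags[(r0 // tile, c0 // tile)] = not cloudy
    return flags


# Left half: a varied scene. Right half: bright and flat, as under cloud.
image = [
    [30, 120, 250, 251],
    [90, 60, 252, 250],
]
flags = usable_tiles(image)
```

Downstream steps could then restrict analysis to the usable tiles, or lower the confidence attached to results from an image with many unusable ones.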

Our new solution consists of image pre-processing, satellite data augmentation from other information sources, automated image quality ranking to determine confidence levels, multiple models (using a variety of frameworks, from Caffe to OpenCV), and an ensemble to combine the results of multiple classifiers.
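The idea of combining several classifiers and attaching a confidence level can be sketched as follows. This is a simplified illustration under stated assumptions, not the actual RS Metrics ensemble: each model is assumed to report a car count with a self-assessed confidence between 0 and 1, the counts are averaged with confidence weights, and the result's confidence is scaled by an image quality score between 0 and 1. The names `Prediction` and `ensemble_count` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    model: str         # label for the model that produced the estimate
    car_count: float   # estimated number of cars in the image
    confidence: float  # model's own confidence, assumed to lie in [0, 1]


def ensemble_count(predictions, image_quality):
    """Confidence-weighted average count plus an overall confidence.

    image_quality is assumed to be a score in [0, 1] from a separate
    quality-ranking step. Returns (estimate, confidence); the estimate is
    None when no model reports any confidence.
    """
    total_weight = sum(p.confidence for p in predictions)
    if total_weight == 0:
        return None, 0.0
    estimate = sum(p.car_count * p.confidence for p in predictions) / total_weight
    mean_confidence = total_weight / len(predictions)
    return estimate, mean_confidence * image_quality


predictions = [
    Prediction("model_a", 40, 0.9),
    Prediction("model_b", 50, 0.1),
]
estimate, confidence = ensemble_count(predictions, image_quality=0.8)
```

A low overall confidence could be used to route an image to human review, which keeps manual intervention limited to the difficult cases.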

Our next article will provide more detail about our approach to ML-driven data processing at RS Metrics, some performance metrics from the new workflow, as well as examples of how it handles difficult cases.

Image source:

Airbus Telecommunications Satellites

About RS Metrics:

RS Metrics is the market-leading company for satellite imagery and geospatial analytics for businesses and investors. RS Metrics extracts meaningful and ready-to-use data from a variety of location-based sources providing predictive analytics, traffic signals, alerts, and end-user applications. These data points can be widely used for decision making in financial services, real estate, retail, industrial, commodities, government, and academic research. Curious to learn more? Contact us directly at: info@rsmetrics.com. You can also follow us on Twitter @RSMetrics and LinkedIn.

Originally published at www.alatortsev.com on April 17, 2018.